Too often, we seem too focused on volume, velocity and activity levels, thinking that the more things we do, the better we are. We set goals for the number of prospecting calls, the number of meetings, and any number of other things.

The thinking is, if we simply do more, we produce more.

At face value, that makes sense. Having 20 prospecting conversations a week is twice as good as having 10 prospecting conversations a week (assuming all else is equal).

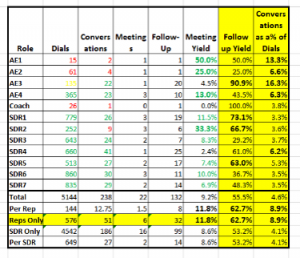

Chris Beall, of Connect and Sell, is gracious in publishing data in this area. The data provides really interesting insights and raises questions for management about performance. I’ve taken some of his recent data and looked at it with a slightly different eye. Clearly, Chris’s premise is driven by volume and velocity. I don’t question that, but the data holds more interesting information about what drives effectiveness.

The data is fascinating. I’ve replicated Chris’s data; the columns and rows in yellow, as well as the bolded entries, are mine. Chris’s data on volume/velocity is compelling. Each SDR, on average, is making 4 times the dials the AEs are, producing a little more than twice the conversations and 30% more meetings. It’s easy to conclude that just dialing more produces more.

As sales managers, we want to maximize the performance, or yield, each of our people produces. As we look at this data, some really interesting things about effectiveness leap out.

- The meeting yield per rep is 36.8% better than that of the SDRs. What is it the reps are doing differently in those conversations? What can we learn about those conversations so we can get all our people to do the same thing?

- The follow up yield per rep is 17.9% better than the SDRs. Again, the same questions arise.

- What’s really fascinating is that the conversations per dial the reps make are 116% of the conversations per dial the SDRs make. What drives such a great difference? Are the reps calling a different set of customers? What can we learn from this?
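To make the comparisons above concrete, here is a minimal sketch of how these yields could be computed. It assumes "meeting yield" means meetings per conversation and "follow up yield" means follow-ups per conversation; the `Activity` class, the `percent_better` helper, and all of the totals are hypothetical illustrations, not the figures from the table.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    """Call activity totals for one person or one group (hypothetical structure)."""
    dials: int
    conversations: int
    meetings: int
    follow_ups: int

    @property
    def conversations_per_dial(self) -> float:
        return self.conversations / self.dials

    @property
    def meetings_per_conversation(self) -> float:
        return self.meetings / self.conversations

    @property
    def follow_ups_per_conversation(self) -> float:
        return self.follow_ups / self.conversations


def percent_better(a: float, b: float) -> float:
    """How much higher rate `a` is than rate `b`, as a percentage of `b`."""
    return (a / b - 1.0) * 100.0


# Hypothetical totals, NOT the numbers from the table above.
aes = Activity(dials=500, conversations=40, meetings=6, follow_ups=20)
sdrs = Activity(dials=2000, conversations=80, meetings=8, follow_ups=30)

conv_lift = percent_better(aes.conversations_per_dial, sdrs.conversations_per_dial)
meeting_lift = percent_better(aes.meetings_per_conversation, sdrs.meetings_per_conversation)

print(f"Conversations per dial: AEs {conv_lift:.1f}% better")
print(f"Meetings per conversation: AEs {meeting_lift:.1f}% better")
```

Note the difference between "X% better" and "X% of": a rate that is 100% better than another is 200% of it, so it is worth being explicit about which one a report is quoting.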

Clearly, we start seeing that while the absolute volumes of dials, conversations, etc. that the reps make are much lower than those of the SDRs, their effectiveness is much higher. As managers, what we want to ask is, “How do we get the effectiveness of the SDRs to the same level as the reps? The results we could produce are much better.”

But there’s more we can learn from this data. AE1 seems to do things very differently than the others. The meetings per conversation are 441% better than the average of everyone. If this isn’t a fluke from the period in which this data was collected, what is AE1 doing differently, and what can we learn to help our other people improve their effectiveness? AE1 also has a much higher conversation/dial ratio than the others: on average, 188% better. Is that AE calling a different customer profile? What is that AE doing that drives a larger number of conversations per dial than all the others? How can we apply that to the other AEs and SDRs?

As we start looking at the data, there are a lot more questions we want to ask—all focused on driving the highest levels of performance we can.

- What is the average duration of the calls? Are there significant differences between AEs and SDRs? What are the top performers doing?

- What’s the quality/outcome of these calls? Are there differences in levels of qualification? Are there differences in what was accomplished in the call? For example, if I make a call and manage, somehow, to get a meeting, but the customer isn’t really qualified, is meetings per call an appropriate measure? What if I looked at calls to disqualify, resulting in a smaller number of high quality meetings?

- Again, looking at the quality and outcome of the calls: was there a difference in what we talked about, what we learned, the level of engagement/buy-in from the customer, the commitments they may have made beyond agreeing to a meeting/follow-up, or even the quality of the meeting/follow-up itself?

- What about the calls that didn’t produce meetings/follow-ups? Were those appropriate outcomes? Could we have done better, could we have improved the yield/outcomes? What might we learn to avoid even dialing those types of customers in the future, if they were the wrong calls?

- What is the total time spent calling? For example, did AE1 spend the whole day making those 15 dials and having 2 conversations? Did SDR6 spend the whole day making those 860 dials and having 30 conversations? What is the general productivity of the people?
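The two data points quoted in the last question (AE1 with 15 dials and 2 conversations, SDR6 with 860 dials and 30 conversations) are enough for a rough per-dial comparison. The sketch below uses only those figures; the time each person spent calling is not given, so it stops short of any per-hour productivity number.

```python
# Figures quoted in the text above: (dials, conversations).
quoted = {"AE1": (15, 2), "SDR6": (860, 30)}

rates = {}
for name, (dials, conversations) in quoted.items():
    rates[name] = conversations / dials
    print(
        f"{name}: {rates[name]:.1%} of dials became conversations, "
        f"about {dials / conversations:.1f} dials per conversation"
    )

# How many times more effective per dial AE1 was than SDR6.
ratio = rates["AE1"] / rates["SDR6"]
print(f"AE1 converts dials to conversations at {ratio:.1f}x SDR6's rate")
```

On these numbers, AE1 needs roughly 7.5 dials per conversation against roughly 28.7 for SDR6. Whether that gap reflects skill, list quality, or simply a small sample of 15 dials is exactly the kind of "why" the questions above are meant to uncover.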

We miss a lot when we look at data without really understanding the “why” that underlies the data. Too often, it leads us to conclusions that may, in fact, be wrong. Or, at the least, we overlook opportunities to improve performance across the organization.

Likewise, we can look at other anomalies in the performance. For example, SDR4 had the most conversations (on a raw number basis) but produced only 1 meeting. Perhaps that SDR disqualified a lot of the customers, moving them into follow up. Or perhaps that person was not as effective in the conversation as possible. AE3 shows a similar pattern. What can we learn about individual performance to coach these people to improve?

Our goal as Front Line Sales Managers is to maximize the performance of each of our people. It is always a matter of assessing effectiveness and efficiency. When we fixate on one–we miss huge opportunities.

Generally, we want to focus first on effectiveness–maximizing the results we get from each dial, each conversation. Once we have mastered that then we work on efficiency–velocity and volumes.

Imagine the scenario if we could get the SDRs to match the effectiveness of the AEs. With the same number of dials, they might have had 216 more conversations—double what they achieved! With those conversations, they might have had 31 more meetings, more than 3 times what they achieved. Or with those conversations, they might have produced 252 follow-ups, about 2.5 times what they produced!
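The "what if" in this scenario is a two-step projection: apply the AE conversations-per-dial rate to the SDR dial volume, then apply the AE meetings-per-conversation rate to the projected conversations. Here is a minimal sketch of that arithmetic. Every number in it is a hypothetical placeholder, not a figure from the table, and it assumes the AE rates would carry over unchanged to the SDRs' call lists.

```python
# Hypothetical SDR totals and AE rates, for illustration only.
sdr_dials = 2000
sdr_conversations = 80
sdr_meetings = 8
ae_conversations_per_dial = 0.08
ae_meetings_per_conversation = 0.15

# Step 1: same dials, converted at the AE conversation rate.
projected_conversations = sdr_dials * ae_conversations_per_dial
extra_conversations = projected_conversations - sdr_conversations

# Step 2: projected conversations, converted at the AE meeting rate.
projected_meetings = projected_conversations * ae_meetings_per_conversation
extra_meetings = projected_meetings - sdr_meetings

print(f"Extra conversations: {extra_conversations:.0f}")
print(f"Extra meetings: {extra_meetings:.0f}")
```

Because the second step builds on the first, the gains compound: improving conversations per dial also increases the number of conversations available to turn into meetings.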

Granted, the jobs of AEs and SDRs are different, as is their experience. So we may not be able to bring average SDR performance up to average AE performance, but if we look at how we close the gap, there is huge room for improvement.

As managers, we have to look at the whole picture. We have to constantly be looking at what’s working best and how we scale it. Effectiveness always comes first, then velocity.

Clearly, Chris may be onto something with his “living life at 1000 dials a day.” But could we be achieving more, more effectively and efficiently?